I wanted to do a write-up on this stuff to mark one year of using ClaudeCode. This document is just me logging, mostly for myself, an intense few years of working with AI tools as a software maker. If you're reading this - thanks for having a look and I hope it's somewhat interesting. I'm not using any AI to write it, review it, or suggest any ideas for it.

My first record of coding with AI was in March 2023 when I was poking around with ChatGPT and wanted to experiment with their API, where you could send a payload and get a response. I was interested in learning Rust at the time too so I killed two birds with one stone and "paired" with Chippity to hack up a little web-app.

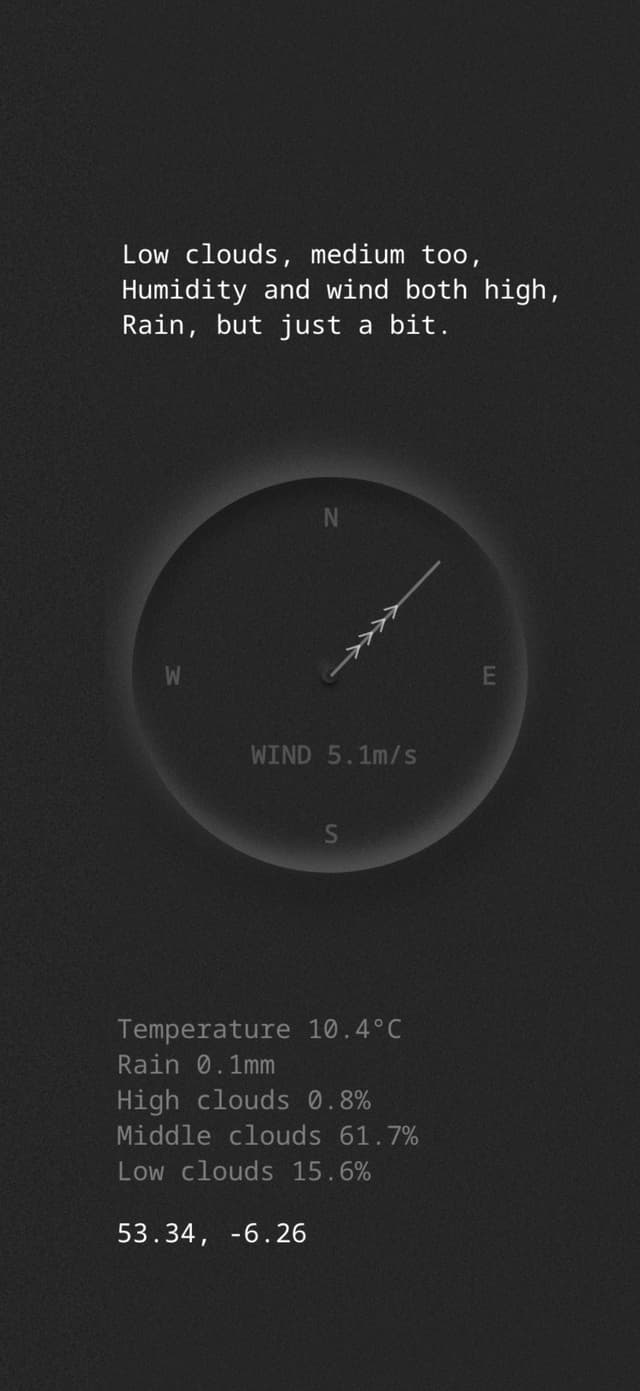

It took the weather forecast from the Yr.no API and sent it to the GPT API to get converted into a haiku with a little serverless function. I had no idea I'd be heavily using Anthropic's Haiku model a year or two later. I'm glad I have an actual record in a public repo of my first crack at it because it was technically my first time paying for LLM tokens.

After that I started yet another portfolio website that never got completed, but I was using ChatGPT to help me build a static web framework with Rust, with the goal being that it would work with web components and do some magic with CSS and bundling. It never really had a clear goal and never got completed.

The next big timeline event for me was in April 2024 when I was hit by tech layoffs. At the time when I was laid off I had been in this career for 18 years. It's never fun being laid off, and this was my second time, but that fresh start mentality had me considering career changes. Wedding and real estate photography were up there as serious ventures and I already have more than enough serious photo gear to go down that path without needing to spend more, and I was ready to get away from desk work.

At the time I was on a digital detox buzz after reading Digital Minimalism by Cal Newport, and had been using my phone in black and white for months to kill the habit of unconsciously unlocking it. It's a very effective way to do it.

Luckily, I opted to buy myself a new Macbook and start taking side projects a bit more seriously instead. In hindsight that layoff was probably the best thing that happened to me during this AI revolution - the timing was perfect, because I started building things just as OpenAI, Cursor and Anthropic were getting really good.

Around the time I was laid off I started following YouTubers like Pieter Levels and Marc Lou, and Marc's shipfa.st product got me thinking that I should build a boilerplate repo that I'd use as a jump-off for all of these other products I was going to start building. YouTube Premium is one of the things I kept even when unemployed - it's very underrated.

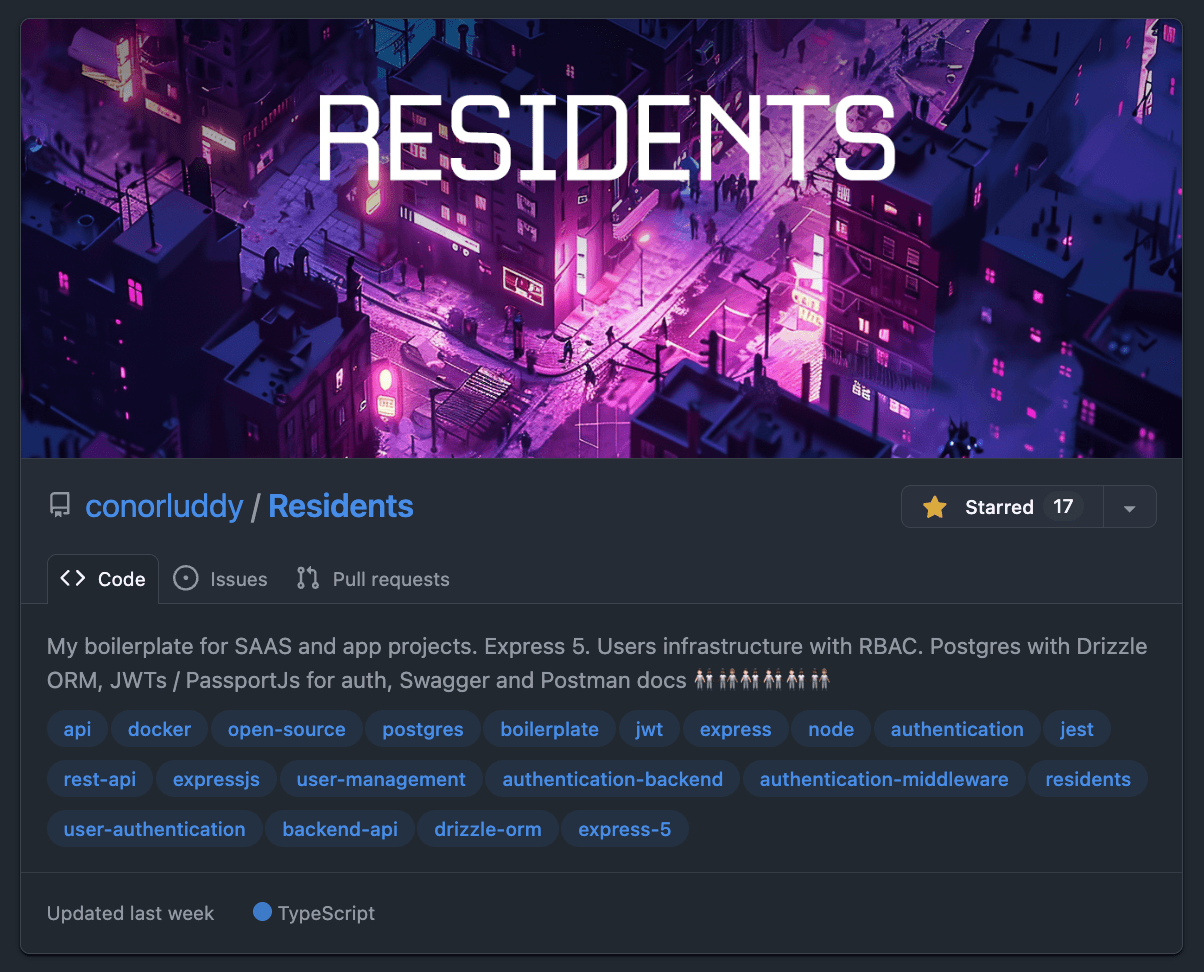

I had never built a backend setup with user auth from scratch before, and I suddenly had a lot of free time, so I started building Residents. The motive being that most apps and products will need to manage users, so let's build the lowest-common-denominator backend API that I can reuse like a cookie cutter.

I learned a bunch about auth, Express, middleware, JWTs, refresh tokens, security, role based access and API testing. The satisfying part about building this was that I was the only person working on it, so I had the freedom to make it fully typed with TypeScript and well covered by tests.
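To give a flavour of the role based access part, here is a minimal sketch. It is not the Residents code: the role names, the `TokenClaims` shape and the `canAccess` helper are all made up for illustration, and it assumes the JWT signature has already been verified somewhere upstream.

```typescript
// Hypothetical roles, ranked from least to most privileged.
const ROLE_RANK = { user: 0, moderator: 1, admin: 2 } as const;
type Role = keyof typeof ROLE_RANK;

// Assumed shape of the claims decoded from an already verified access token.
interface TokenClaims {
  sub: string; // user id
  role: Role;
  exp: number; // expiry, in seconds since the Unix epoch
}

// True when the token has not expired and the role is high enough.
function canAccess(claims: TokenClaims, required: Role, nowSeconds: number): boolean {
  if (claims.exp <= nowSeconds) {
    return false; // expired: the client would need to use its refresh token
  }
  return ROLE_RANK[claims.role] >= ROLE_RANK[required];
}

const exampleClaims: TokenClaims = { sub: "user-123", role: "moderator", exp: 2000 };
console.log(canAccess(exampleClaims, "user", 1000));  // moderator may use a user route
console.log(canAccess(exampleClaims, "admin", 1000)); // but not an admin route
```

In an Express app a check like this would typically sit in a middleware in front of the route handler, which is roughly where the auth, middleware and role pieces meet.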

At that point I would have been discussing the codebase with Chippity (I just searched in my ChatGPT dashboard and I had set up one of those custom GPTs and called it "ResidentOne"). I was zipping up code repositories and uploading them as an asset for the GPT, using it to get feedback on the code and advice on how to do auth securely, and then iterating that way.

I'm still maintaining Residents, and it's a special one for me because it has been through every phase of my AI journey so far. Starting with Chippity, then Cursor and Continue, and now being occasionally updated by the latest Claude models. It's likely the last repository I'll ever have coded the majority of "by hand", because it's no longer efficient to do that.

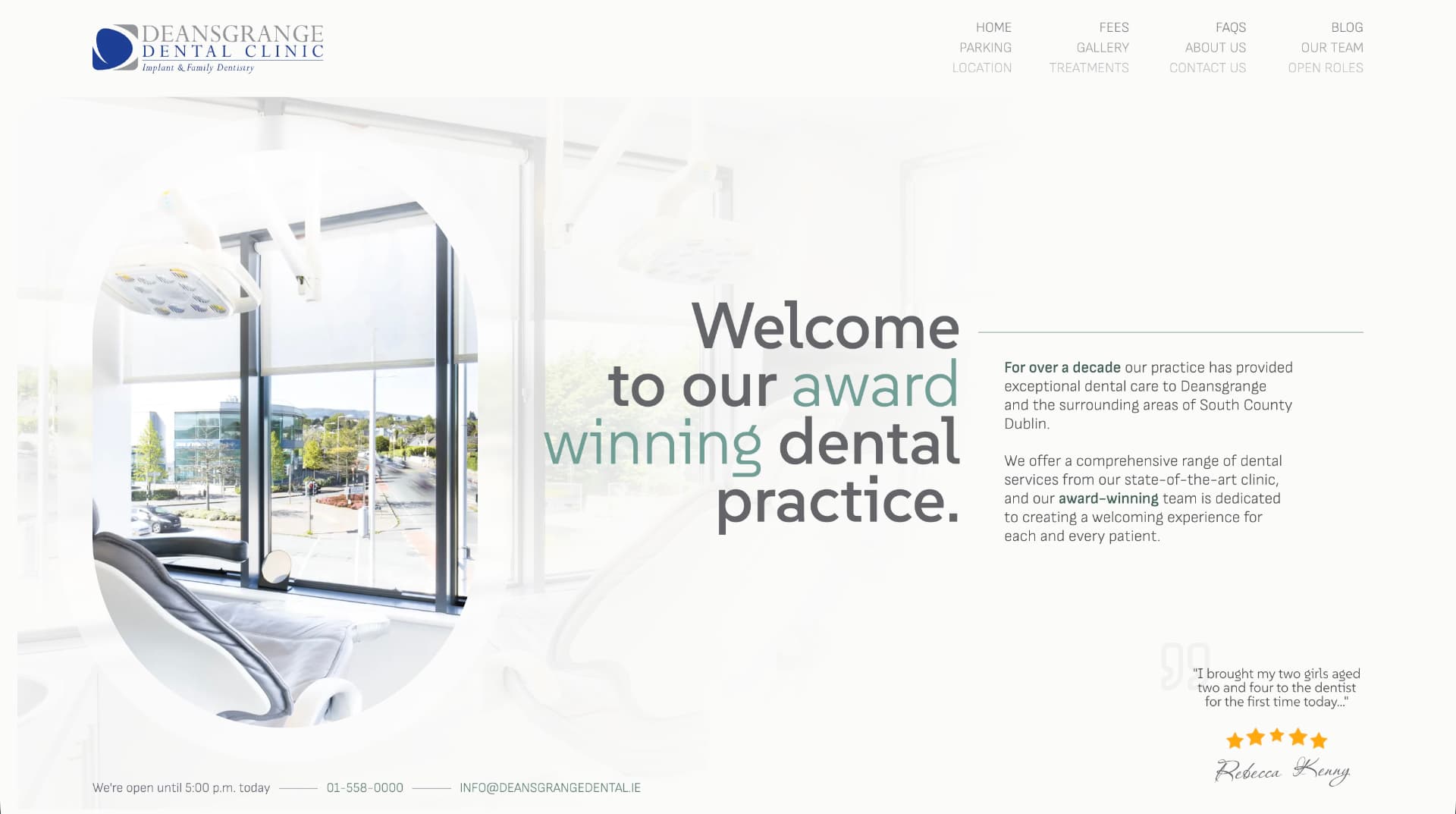

Despite planning to take a few months off and decide what to do next, I ended up starting a new job with Nory.ai in May '24. I got busy in there very quickly and the personal side products slowed down to a crawl, but I was gradually building a wedding portal website for a friend and designing and building a NextJS-Wordpress website for a dental clinic.

Actually while looking at that site again to get this screenshot, I remembered that AI helped me to find some unusual ways to do scroll tracking and responsive visibility with native CSS variables.

In trying to piece this timeline together, I dipped into my emails and found a "Making the most of Cursor" email from 4th September 2024, followed by a "Receipt from Anthropic" 14 days later. Cursor is a great product, but it was the Claude/Sonnet API that was doing the AI work, so I never ended up subscribing to Cursor - I went straight to the source and I've been an Anthropic customer since.

There was (and still is) a VSCode plugin called Continue, that you could use to get a similar setup to Cursor, with a chat panel in the IDE that you use to specify files or lines of code to pass to Claude, and this became my daily driver at work and at home. Rather than paying a fixed subscription to Cursor I was just paying directly for token usage from Anthropic.

Nory.ai are obviously a product company who lean into AI and using these tools in there was encouraged. That's where I'd start to really notice the rift between engineers who were leaning into it and those who had no interest in using it.

I used to share things on Slack in work when I found useful or interesting ways of working with AI while coding. I remember writing up at the time about how pairing with AI gets you into the flow-channel quicker. The flow channel is a theoretical graph that you can Google, with Challenge on the Y axis and Skill on the X. When you're working "in the zone" in the sweet spot of skill and challenge, anxiety and boredom are minimised and you get very focused. AI tooling can augment your skills and reduce most challenges, so I always found it got me into that flow super quick.

At this point I was still in chatbot territory, with Continue making code changes to files from within VSCode. Agentic coding with ClaudeCode hadn't arrived yet, but there was (and still is) a tool called Aider that gave AI command line access in a similar way. I'm still on that same Macbook, so I can just ask Claude to check the file system and figure out when I installed that...

"Aider was first installed on October 19, 2024" -- ClaudeCode

At the time when I was trying it out we had a little bit of tech debt in the frontend code in Nory. I recorded a Loom video of me using Aider to decompose a React component into 12 smaller React components. Not complicated work, but definitely repetitive and time consuming, creating prop types for them all and extracting shared code etc.

I shared the video thinking it was awesome what could be done with Aider. I think I only had a couple of replies, one being "Command line access for AI - what could go wrong...".

At the same time our head designer in there was loving Cursor and giving us a dig out with updating our design system tokens in the codebase. It was interesting to see how some engineers shy away from AI tooling while some designers jump in head-first.

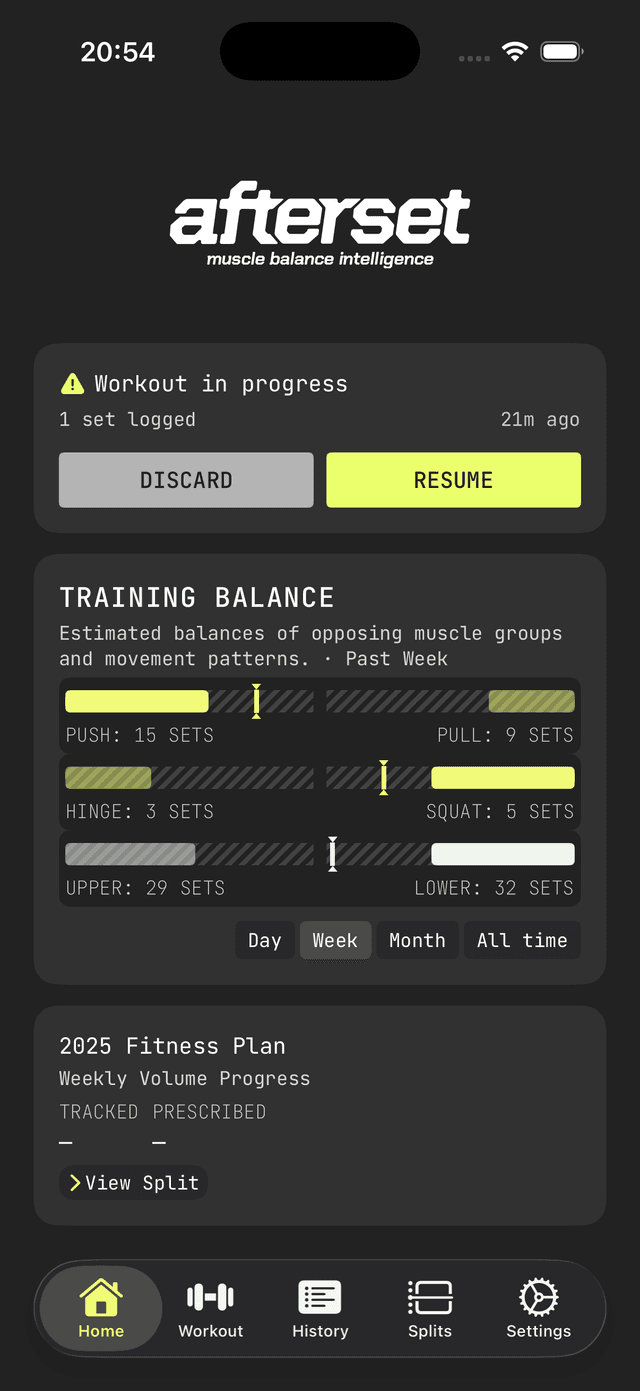

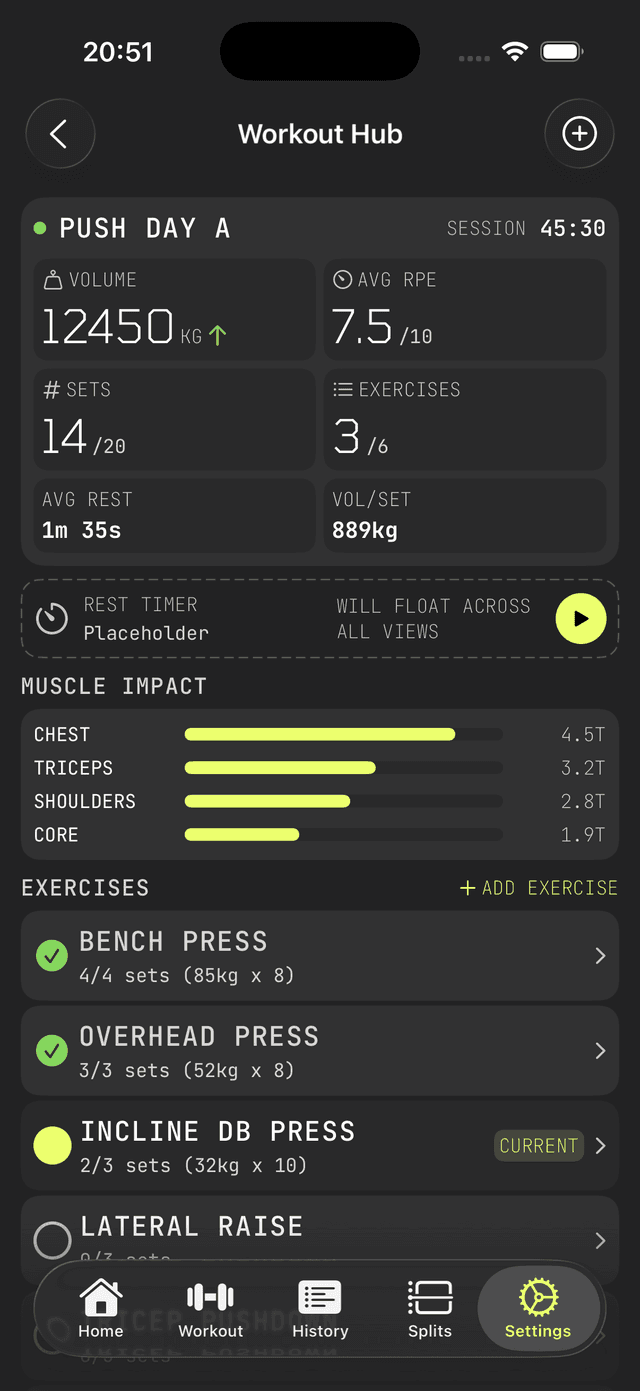

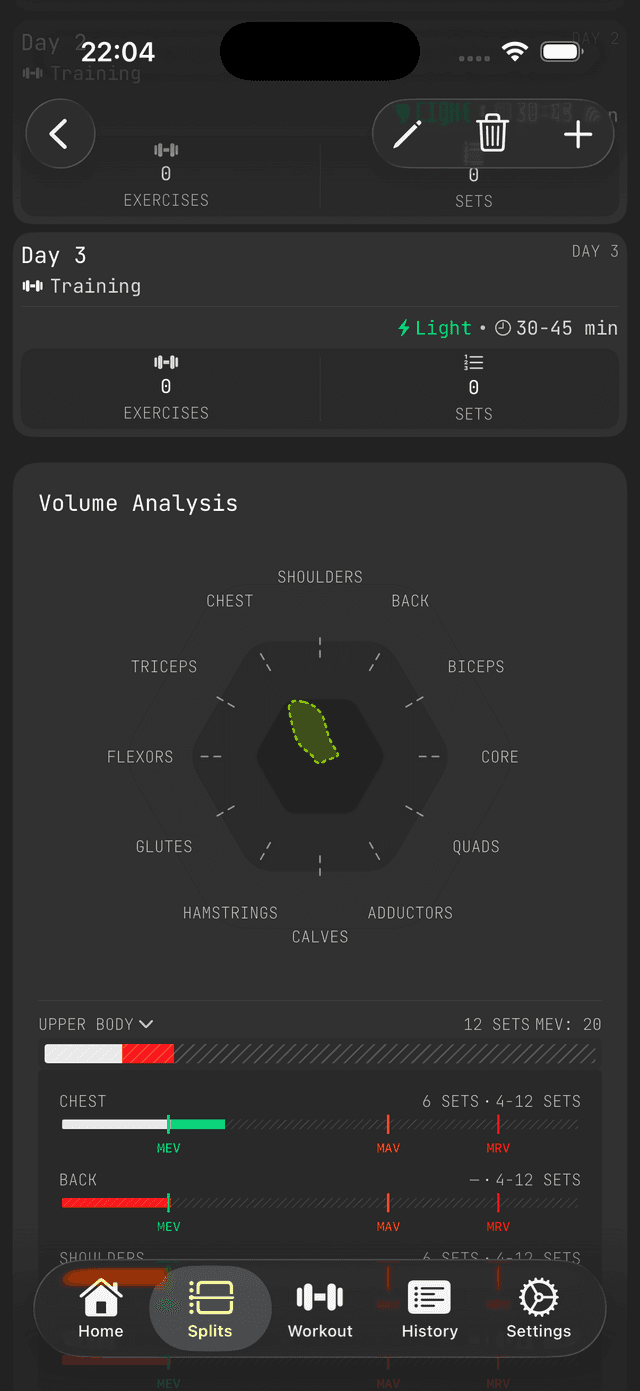

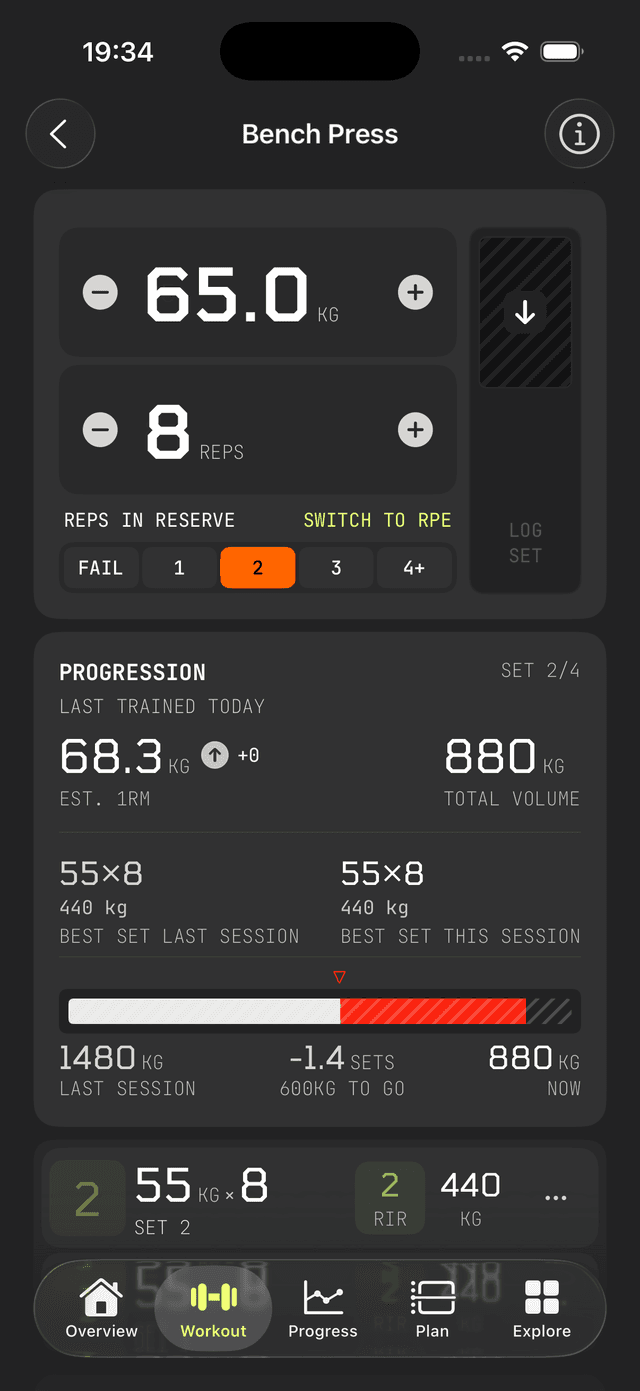

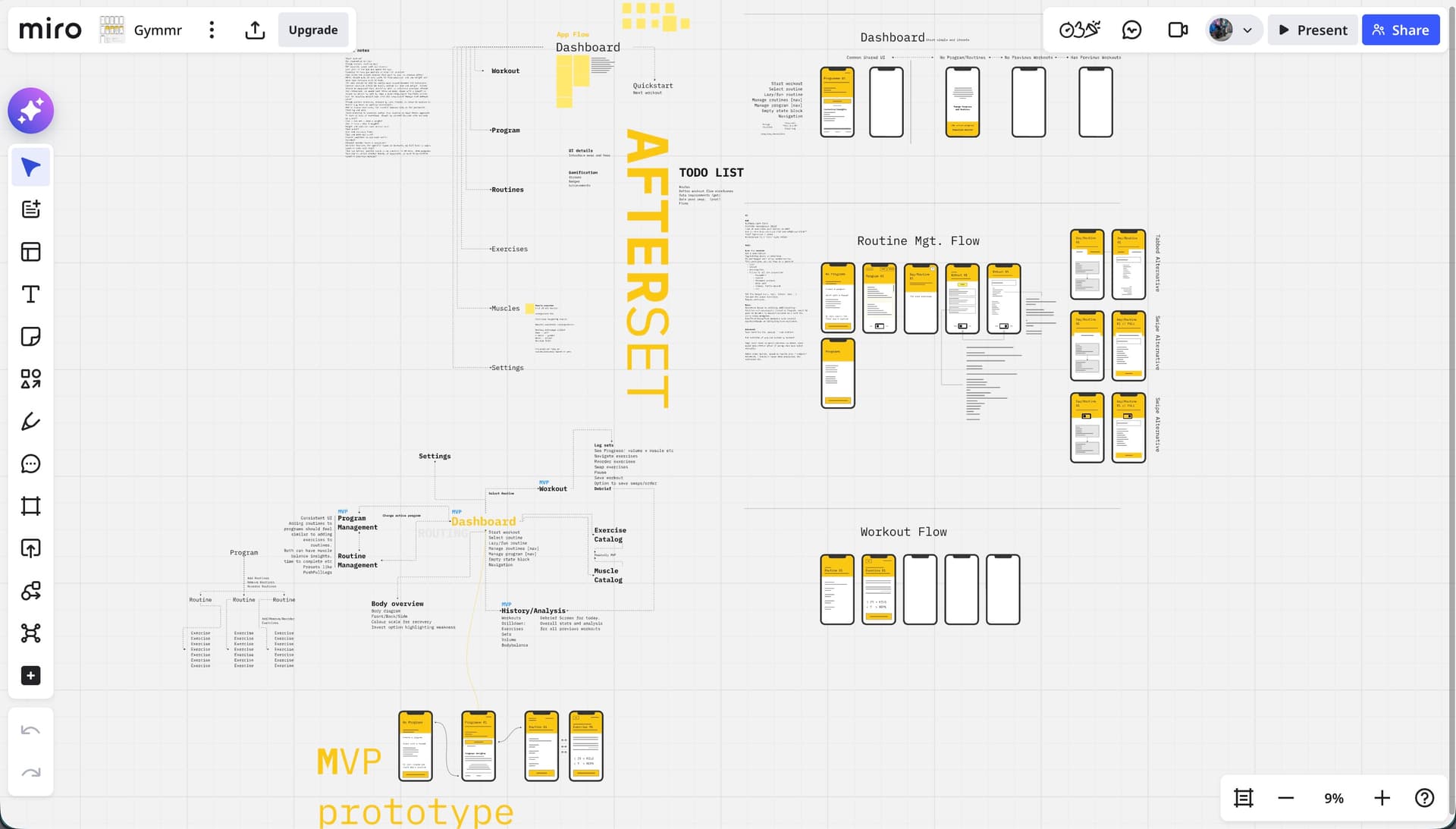

Back over in side-project territory I had started building a gym app I was calling Afterset (named that because that's when you log your set). I realised I could also use AI to generate data for muscles and exercises - not just write code. So now it was trivial to create hundreds of exercises and get AI to define what proportion of each exercise would hit each muscle. A recurring theme with working with AI is that the more you use it the more "oh you can do THAT with it too" ideas naturally occur.

I started learning about graph data structures and graph databases through building Afterset. Without AI I'd never have had the patience or time. Using Residents as a boilerplate, Claude and I built an Arango database that we'd use as a back-end for a weight training app, where each set of an exercise could be roughly attributed to each muscle involved, so that as you build up training logs you'd be able to see what muscles are being used too much or too little. I got stuck into Miro and started wire-framing. The app/front-end of Afterset had no plan yet and I'd never tried any iOS/Swift development at this point. I started trying out React Native but ended up parking the app for the time being.
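The idea of attributing each set to the muscles involved can be sketched in a few lines. This is only an illustration and not Afterset's actual data or code: the exercises, the proportions and the `muscleVolume` helper are invented, and it uses plain objects rather than a graph database.

```typescript
// Hypothetical exercise data: each exercise maps muscles to a share of the work.
// The shares for one exercise are assumed to add up to 1.
type MuscleShares = Record<string, number>;

const EXERCISES: Record<string, MuscleShares> = {
  "bench-press": { chest: 0.6, triceps: 0.25, shoulders: 0.15 },
  "overhead-press": { shoulders: 0.6, triceps: 0.4 },
};

interface LoggedSet {
  exercise: string;
  reps: number;
  weightKg: number;
}

// Spread the volume (reps x weight) of every logged set across its muscles.
function muscleVolume(log: LoggedSet[]): Record<string, number> {
  const totals: Record<string, number> = {};
  for (const set of log) {
    const shares = EXERCISES[set.exercise];
    if (!shares) continue; // unknown exercise: skip it rather than guess
    const volume = set.reps * set.weightKg;
    for (const [muscle, share] of Object.entries(shares)) {
      totals[muscle] = (totals[muscle] ?? 0) + volume * share;
    }
  }
  return totals;
}

console.log(
  muscleVolume([
    { exercise: "bench-press", reps: 10, weightKg: 100 },
    { exercise: "overhead-press", reps: 10, weightKg: 50 },
  ]),
);
```

Summing these totals over weeks of training logs is what would show which muscles are getting too much or too little work.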

The next thing on this timeline was ClaudeCode. I was still using Claude via Continue a lot at this stage, at work and at home, still using the pay-as-you-go model via the API. ClaudeCode came out as a developer preview in late February 2025 - I'm writing all of this on its first birthday. I started using it from day one, but at the time it was also billed by the token through the API, and it absolutely burned through money. It was way more expensive than using the API through Continue, but even then it felt like a completely different beast to Cursor and Continue.

I still kept Continue as my primary tool due to the cost of using ClaudeCode, but if I was stuck on something I'd reach for ClaudeCode and it would nearly always nail it. I'd definitely have been using ClaudeCode full-time back then if I'd had a room full of cash to burn.

In spring 2025 I left Nory and went back to Toast to work on a React Native product. I had a lot of React experience by then but no significant iOS or Android experience, making it a good move for learning some new tech. It aligned well with me wanting to build my own products and iOS apps. By now most big tech companies had started to push these new AI workflows too - so we had pilot programs for Cursor, followed soon after by ClaudeCode. ClaudeCode has been my main tool in my day-job for about 9 months now too and writes most of my code. I'll write a separate more technical post about how I work with it on the day to day.

By now ClaudeCode had the new subscription model, and I was using the basic €20/month subscription on my personal account, but was hitting the usage limits too quickly. Soon I bumped up to a Claude Max account at around €100/month. I think if there's ever an important time to pay this much for a subscription, it's during these formative years of AI.

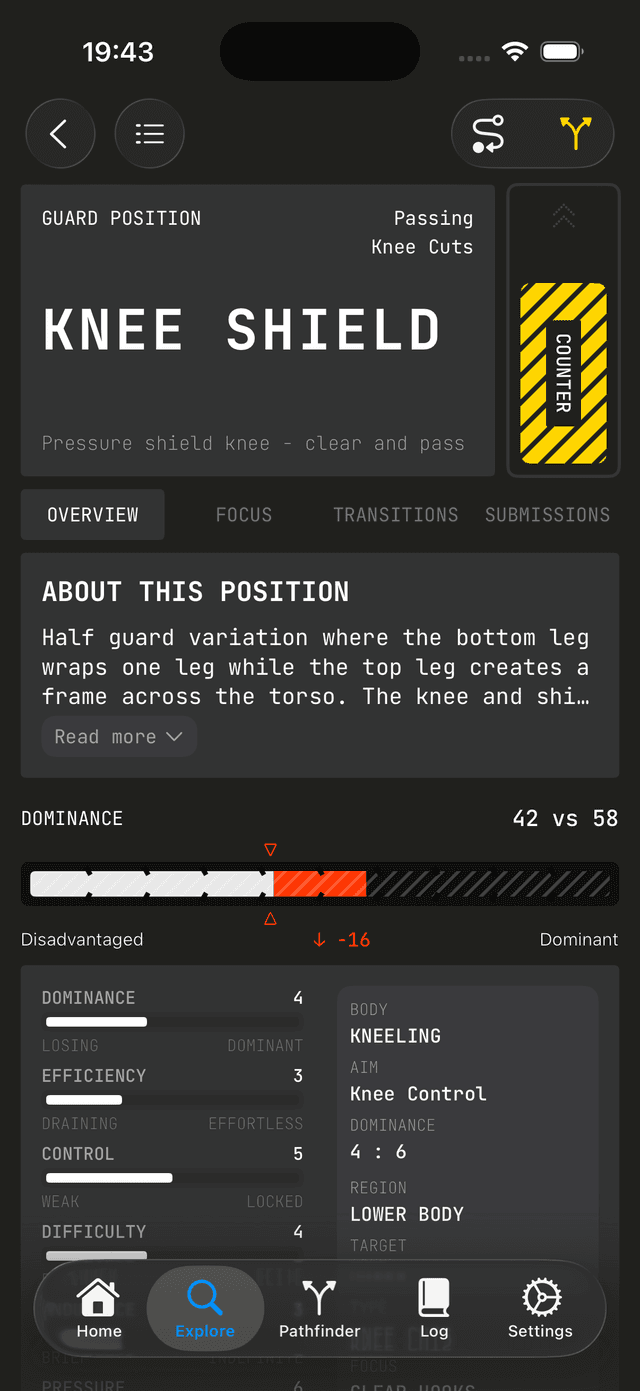

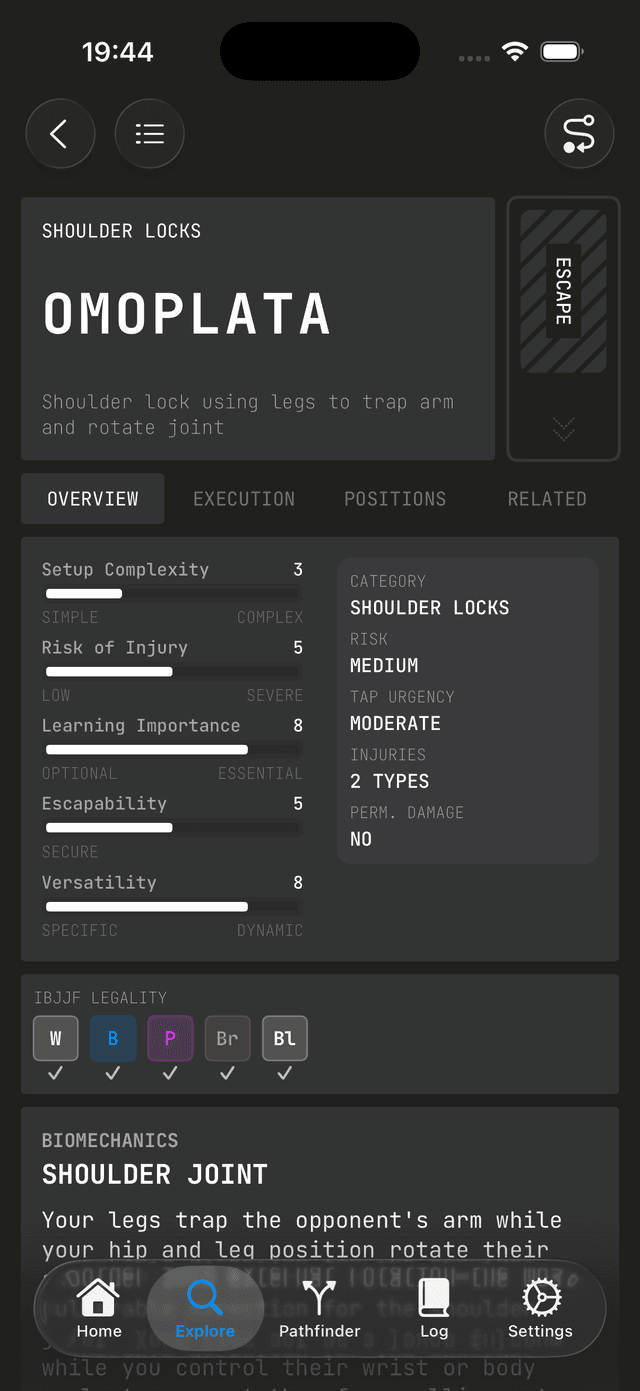

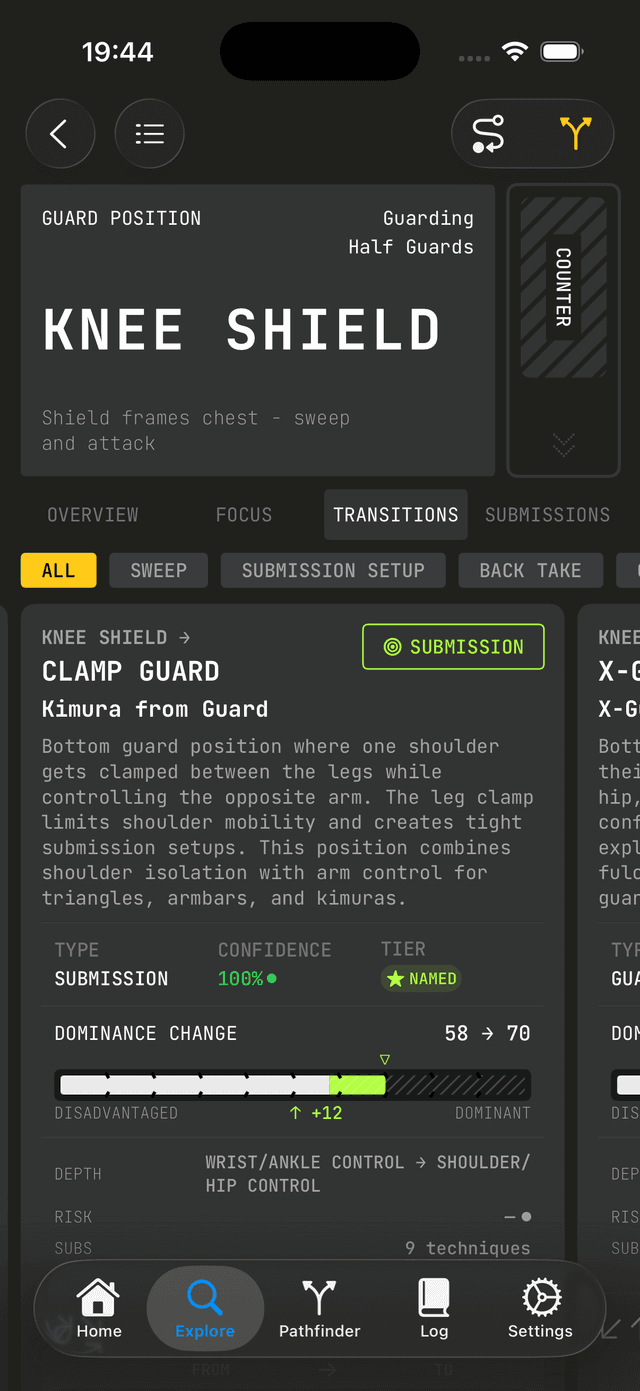

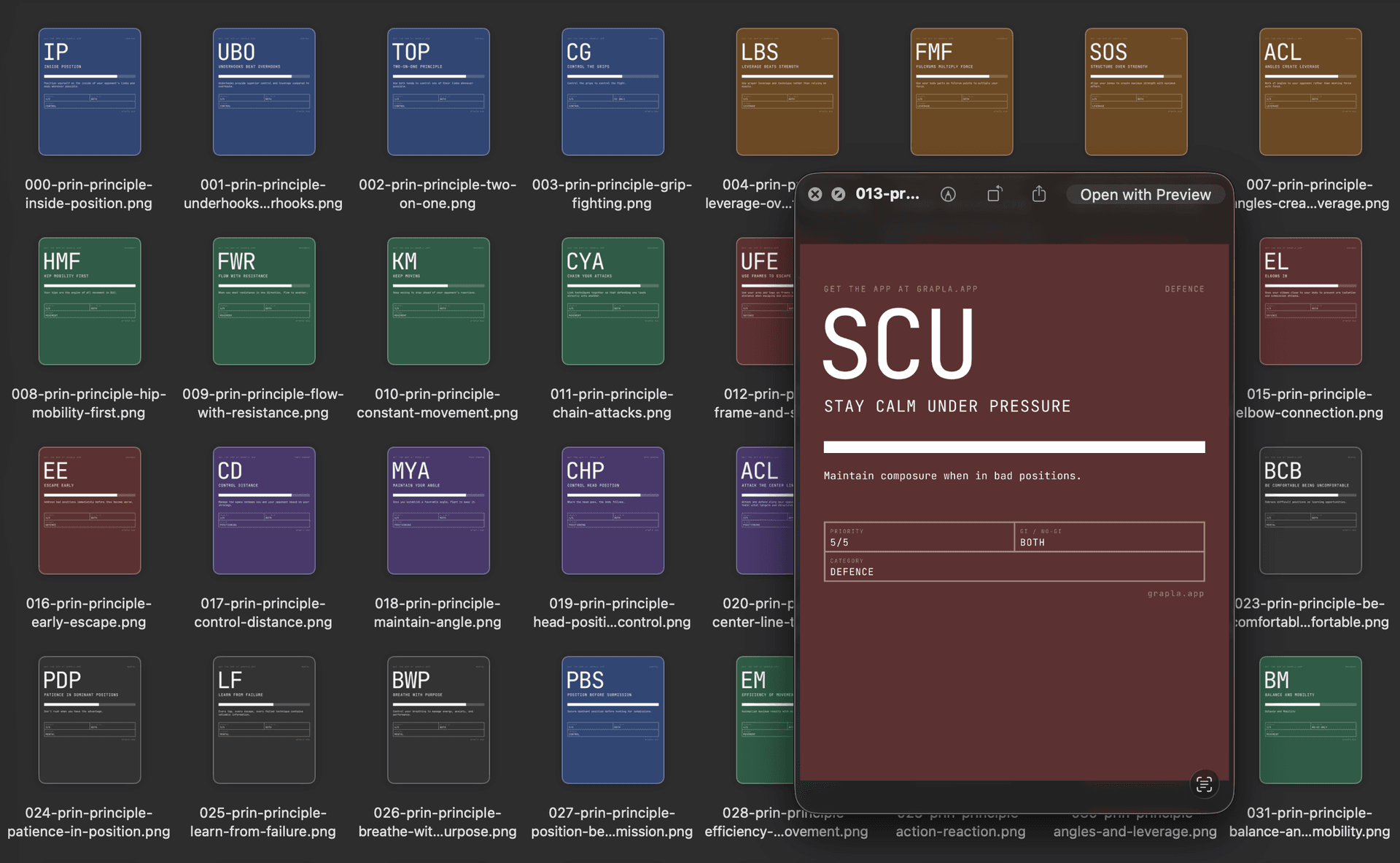

I was hitting the usage limits because I had started building a new iOS app called Grapla and the flow state with ClaudeCode was getting highly addictive. I ended up committing code to my personal Github account on 360 days of 2025. Grapla turned into a massive rabbit-hole that I'm still building, but it's teaching me a lot.

"The Grapla repo began on April 30, 2025" -- ClaudeCode

I won't get into the details of that app here because it'll end up doubling the length of this essay. It'll be a good subject for a series later. But it taught me a massive amount of iOS development, and triple that again in ways of building with Claude. One recurring theme in both work and side projects is the speed that you can build tooling and scripts to support the main project, and these often grow into their own side projects.

For iOS projects I ended up building XC-MCP (an MCP that wraps xcodebuild so that Claude can build your app without having to eat 40,000 tokens in output logs), ios-simulator-skill (now with 500+ stars on GitHub, but it's just a Claude-Skill port of XC-MCP), and another tool called Persuader - intended to "persuade" Claude to consistently generate data to match a schema.
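The token saving comes from handing the agent a summary instead of the raw build log. Below is a rough sketch of that idea only. It is not how XC-MCP is implemented and it uses no MCP SDK calls: the `summariseBuildLog` helper and the `BuildSummary` shape are hypothetical, and the ` error: ` / ` warning: ` / `** BUILD SUCCEEDED **` markers are assumptions about what typical build output looks like.

```typescript
// Hypothetical compact summary handed back to the agent instead of the full log.
interface BuildSummary {
  succeeded: boolean;
  errors: string[]; // the first few error lines, trimmed
  warningCount: number;
  totalLines: number; // how much raw output was condensed
}

// Reduce a raw build log to the handful of lines an agent needs to act on.
function summariseBuildLog(rawLog: string, maxErrors = 20): BuildSummary {
  const lines = rawLog.split("\n");
  const errors: string[] = [];
  let warningCount = 0;

  for (const line of lines) {
    if (line.includes(" error: ")) {
      if (errors.length < maxErrors) errors.push(line.trim());
    } else if (line.includes(" warning: ")) {
      warningCount += 1;
    }
  }

  return {
    succeeded: rawLog.includes("** BUILD SUCCEEDED **"),
    errors,
    warningCount,
    totalLines: lines.length,
  };
}

// Made-up sample output, just to exercise the function.
const sampleLog = [
  "CompileSwift normal arm64 /app/Views/Home.swift",
  "/app/Views/Home.swift:12:5: warning: variable 'x' was never used",
  "/app/Views/Home.swift:20:9: error: cannot find 'foo' in scope",
  "** BUILD FAILED **",
].join("\n");

console.log(summariseBuildLog(sampleLog));
```

The same shape works whether it is exposed through an MCP tool or a Skill script: run the build, keep the full log on disk, and only return the summary into the session.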

Back in the day job I was still sharing ways I was finding useful to work with Claude, and sharing tips and shortcuts and insights. I gave a couple of talks on MCP servers, one virtually and one in a room full of real people in our Boston office - explaining what MCPs are for and why they can be valuable and/or save tokens and money. This boils down to "context engineering" and optimising how context and tokens are used - although since promoting MCPs back then, it's now better to use Skills and not to have any MCPs or plugins active unless you're actively using them. They add unnecessary tokens to every session, costing you money even if you're not using them.

At the time though, I was all about the MCPs, and thought I'd try to build an MCP that would spawn other MCPs, like a boilerplate/manager type thing. I called it ContextPods because the pods were supposed to be little modular MCPs that would handle context for the agents. Never ended up using it for anything in the end, but all of these were practice and taught me different things.

The Grapla app was taking a long time but had also produced a lot of reusable patterns and tooling. One Saturday I had been thinking about the Afterset app that hadn't been touched since I started Grapla. The JSON data that had been generated for it was ripe for revival, and since I'd last looked at the project I'd gone and learned a bunch about iOS development. Now I knew it didn't even really need a back-end - everything could just live on the phone and work offline.

I decided to see if we could start an iOS project for Afterset on a weekend and have it submitted to the AppStore by the Sunday evening. Apple reviewed it within 24 hours, and it was live and downloadable by Monday evening. It was far from perfect, but it was proof that all the work on my other app had paved the way for getting apps built fast.

The problem now was that Afterset was live as a weekend project, but I couldn't just leave it there like that, it needed a bunch of work to make it actually be any good. The POC of getting an app live in a weekend had turned into a time trap. So Grapla got parked for a while and I tried to get Afterset up to a state where it could be used in the gym without having too many missing features. It needs a lot more work, but it's another app that I can do a complete write-up on soon.

I finally parked Afterset (it still needs more work) and got back to Grapla, and while Claude was building me out a separate NextJs marketing website for it we got chatting about ideas. All of the data for the app is JSON, so it can be plucked into other projects too. With the marketing website done we started discussing how we could generate other marketing assets.

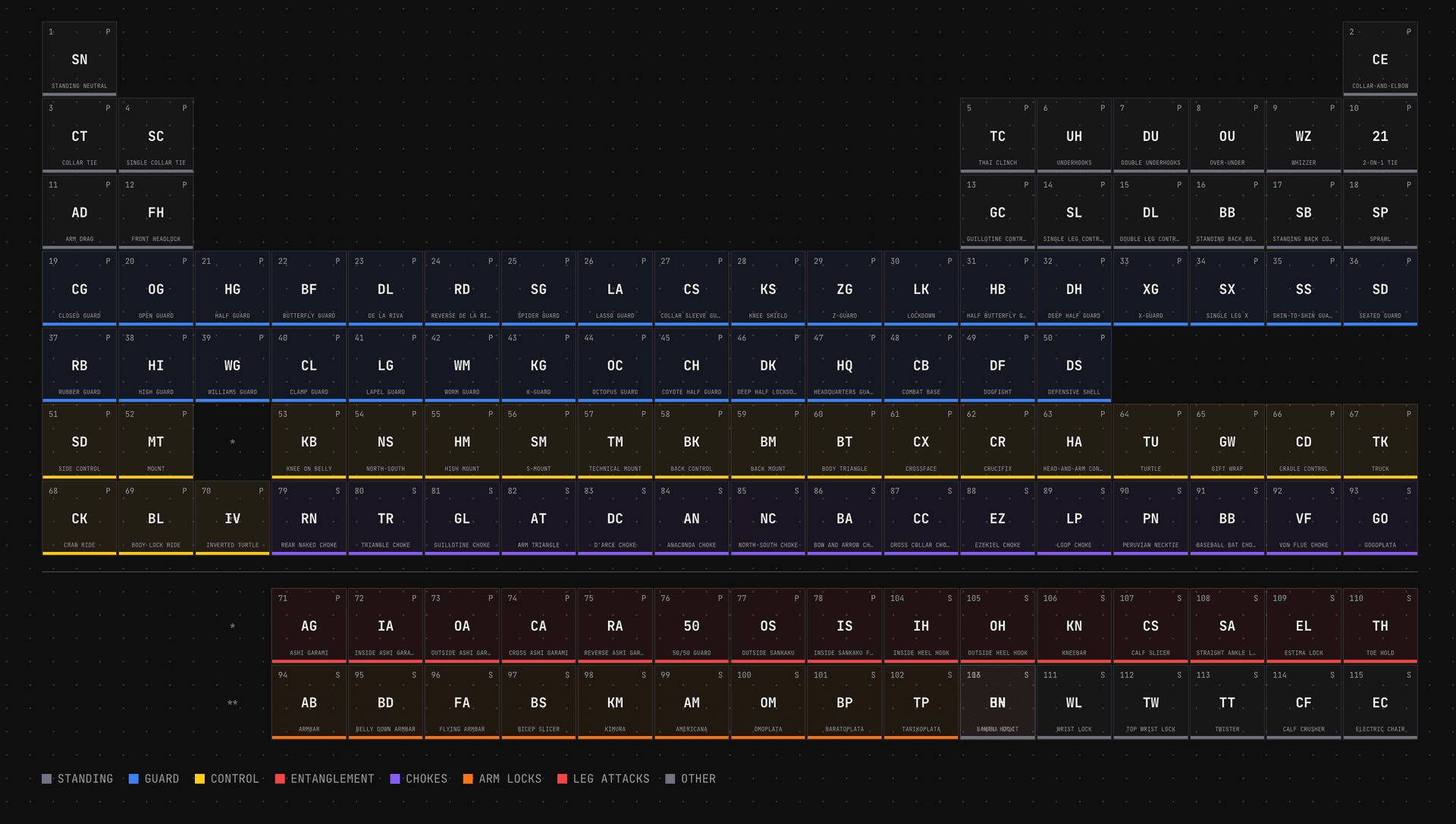

I often tell Claude to create experiments using prompts like "build us ten variations of X" and we'll cross pollinate ideas and polish up something that comes out of it. In this case it randomly came up with a periodic table of Jiu Jitsu that turned into a super feature for SEO on the website - but that also evolved into something that could render social media images for hundreds of the positions in our app data.

So we had an endpoint that rendered images in social media format, and a script that would hit it for each entity in our data. Hundreds of images generated in under a minute, using a nice colour palette generated by ChromaJs. Claude then went a step further and created flow videos with ffmpeg. Grapla is a Jiu Jitsu app, so these videos are all just flow sequences ending in submissions, perfect for the marketing Tiktok/Insta accounts.

If anything can be done on a command line, Claude can do it and automate it.

The latest thing I've been exploring is augmenting Obsidian with Claude. I'm seeing if I can build a Zod and data graph layer over it that Claude can plug into. Then I'll be using it as a central knowledge base that contains documentation, specs and idea/discovery content for all of my projects. Claude will be able to extract a strongly typed JSON representation of it all and hopefully able to cross-pollinate ideas and resources between everything.

Before I go too deep down that rabbit hole though I need to get Grapla launched.

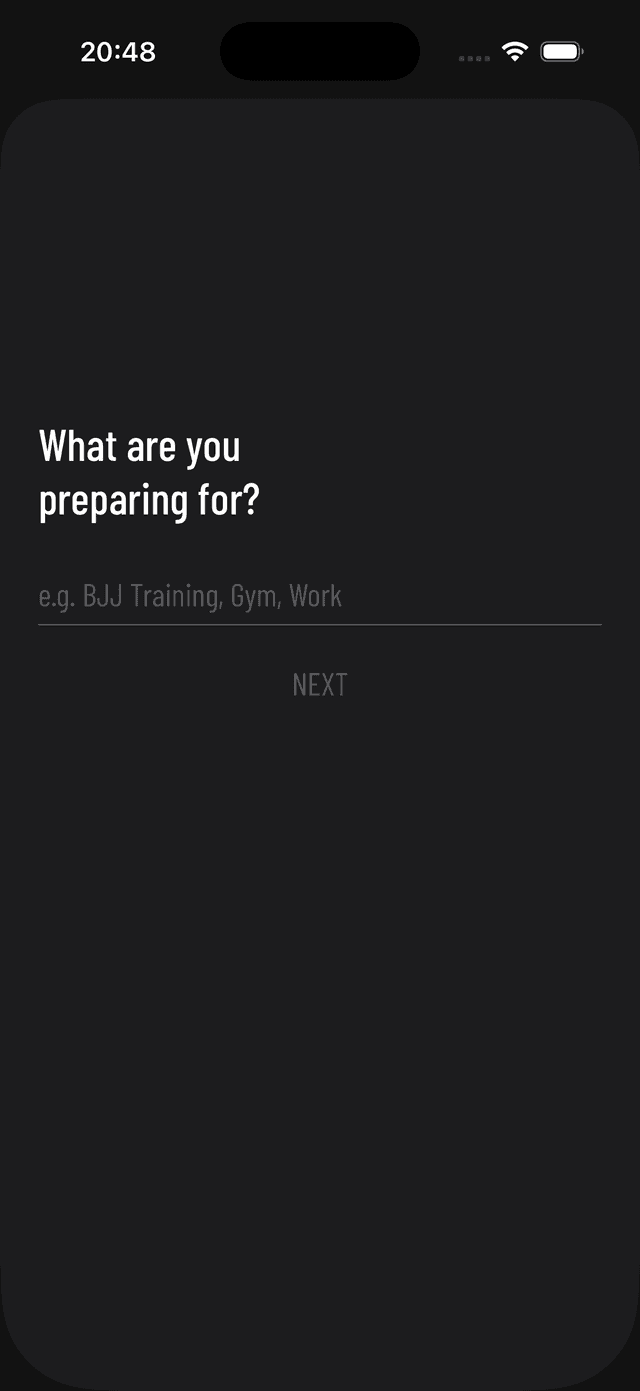

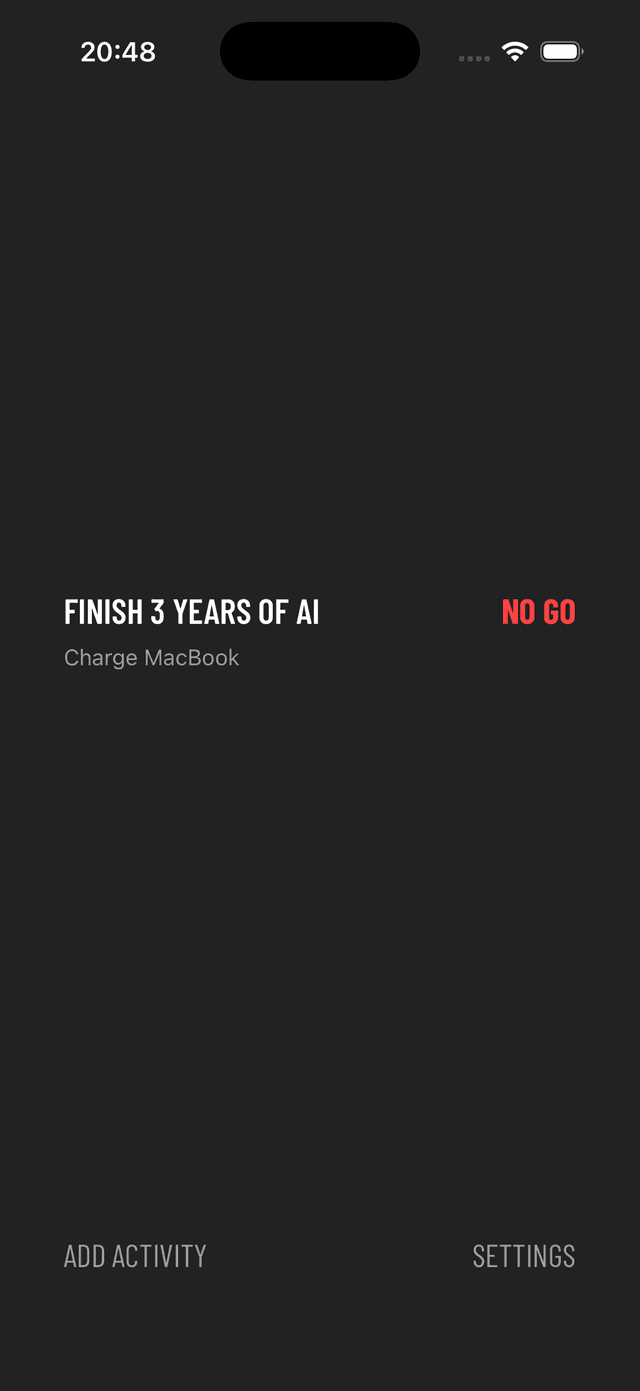

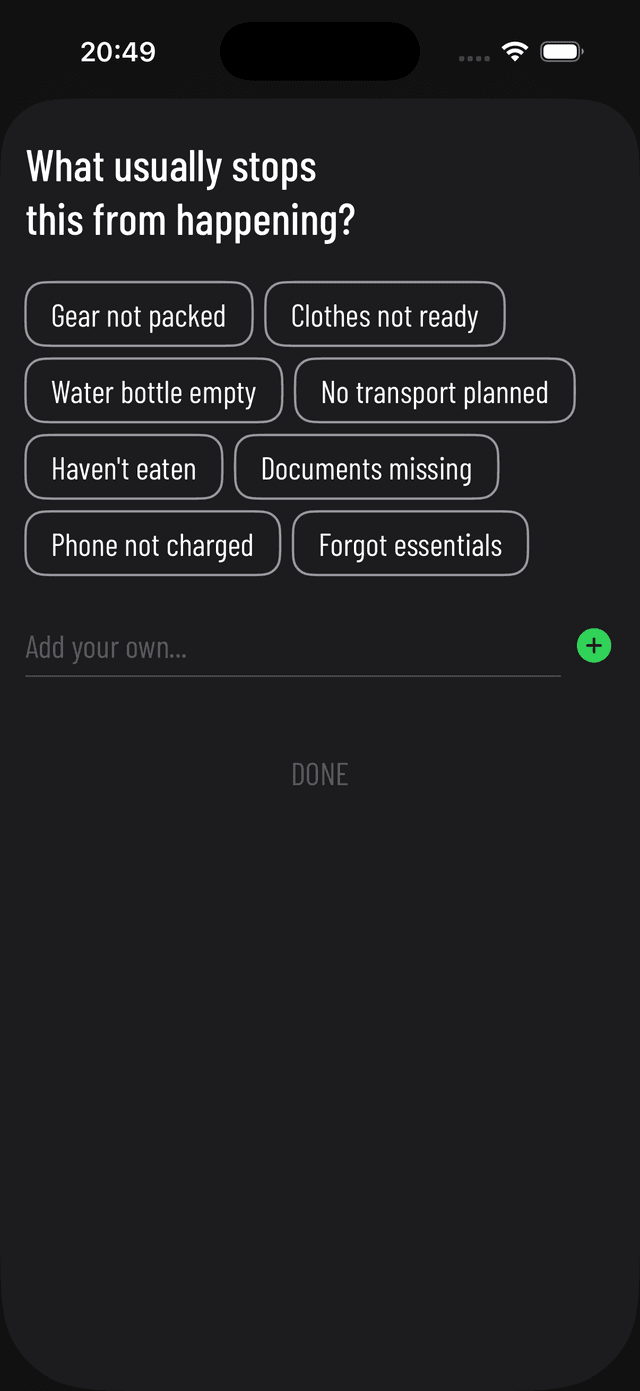

Somehow between starting this write-up and finishing it, I ended up building another minimal app called FrictionList that's currently being reviewed by Apple.

These little CSS phone mockups and the timelines at the edges (only on big screen) were also built by Claude, purely for this article - they were fun.

I'll never be dogmatic about ways to work with AI - it's a very open-ended and subjective experience and everyone has their own approach. But I'll be sharing what works for me, and what feels like useful details of all of the different things I worked with it on over the past few years. More ramblings coming soon...